projec

projec t

t  de

de al

al

posted: April 24, 2026

At Anthropic, we’re interested in how AI models could begin to affect commercial exchange. (You might recall Project Vend, where we had Claude run a small business from our office.)

Recently, economists have begun theorizing about a world in which AI models handle many or most transactions on humans’ behalf. We thought we’d run a new experiment—Project Deal—to learn more about this in practice.

Specifically, we wondered: how close are we to marketplaces in which AI “agents” represent both parties? Could they figure out what humans want and make deals they’d be happy with? And what would happen if there were different AI agents negotiating with each other—would stronger models gain the upper hand?

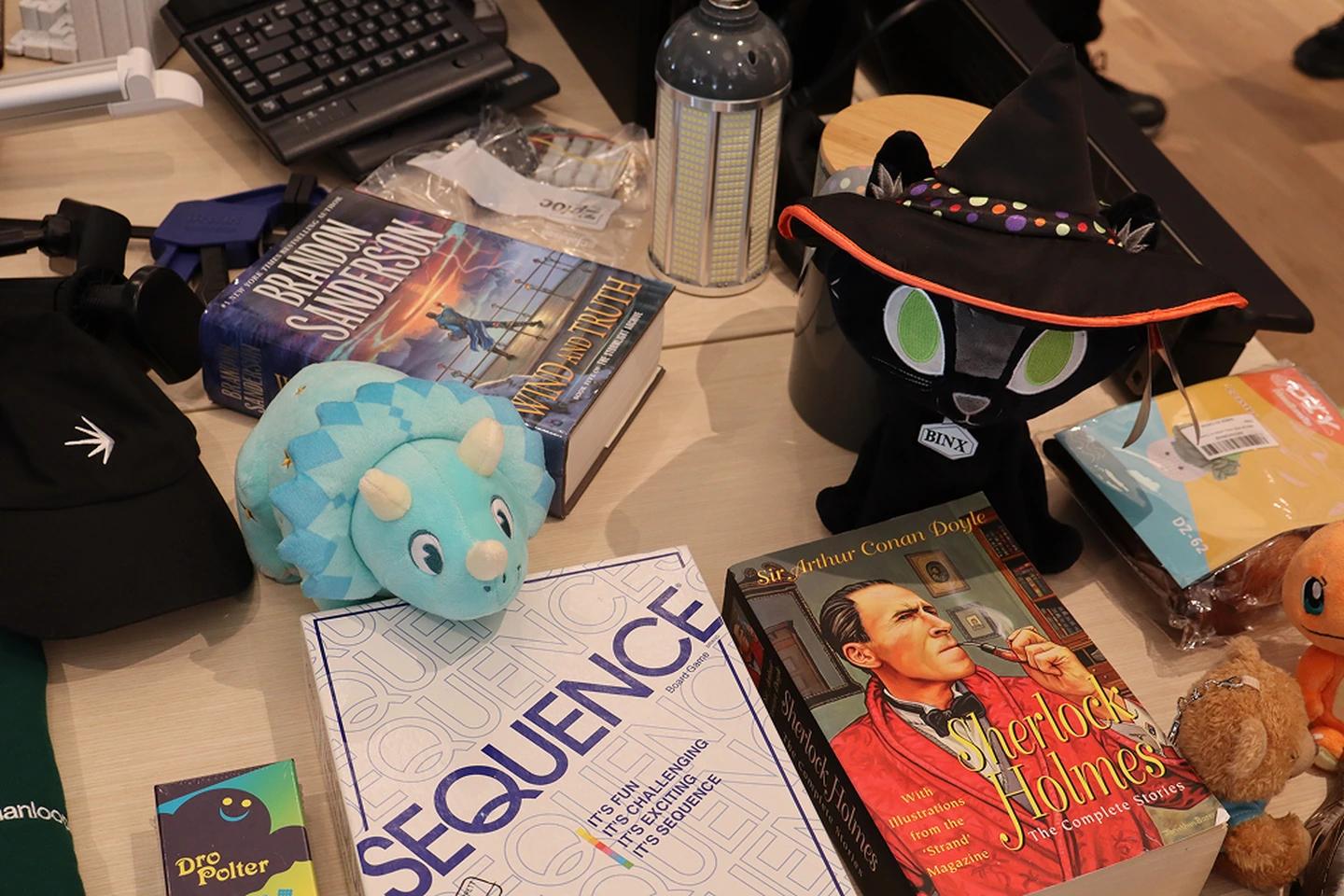

For one week, we created a classified marketplace for employees in our San Francisco office—like Craigslist, but with a twist: all of the deals were conducted by AI models acting on our employees’ behalf. In December 2025, Claude interviewed people about which of their personal belongings they might want to sell and what sorts of things they might be willing to buy. We incentivized participation by giving everyone’s agent $100 to spend. Then, our employees’ Claude agents made postings vying for each other’s attention. Negotiations commenced. Deals were made, closets decluttered. At the end of it all, people brought in and exchanged the actual, physical goods that were haggled over by their AI avatars—covering everything from a snowboard to a plastic bag full of ping-pong balls.

We were struck by how well Project Deal worked. Our AI agents struck 186 deals at a total transaction value of just over $4,000. To our surprise, participants were very enthusiastic about the experience—they even stated a willingness to pay for a similar service in the future.

But we also ran a parallel experiment (this one in secret). We tested how our participants would fare if we varied which Claude model represented them. We compared our then-frontier model, Claude Opus 4.5, to our smallest model, Claude Haiku 4.5. We found that agent quality does make a difference: people represented by “smarter” models got objectively better outcomes. Yet our post-experiment survey found that those with weaker models didn’t notice their disadvantage.

To be sure, this was a pilot experiment with a self-selected participant pool. But we suspect we’re not far from more agent-to-agent commerce bubbling up in the real world, with real consequences.

The setup

First and foremost: to run this experiment, we needed a set of brave human volunteers who possessed both lots of stuff they wanted to get rid of and a possibly abnormal willingness to let AI play an influential role in their lives. Fortunately, such a group was very readily available to us—our own colleagues. We recruited 69 Anthropic employees, gave them each a $100 “budget” (paid out after the experiment in the form of a gift card, plus or minus the value of whatever they bought or sold), and promised them that they would actually get to execute the exchange of goods agreed upon by their agents.

Volunteers on board, we asked Claude to conduct an interview with each one, in a format much like our Anthropic Interviewer. This elicited a wealth of information: what our volunteers wanted to sell, how much they wanted to sell it for, what they were interested in buying, what they’d pay, and any other instructions they had for the negotiation or interaction style of their agents. These responses informed custom system prompts that we set for each person’s AI representative.

Participant chats with Claude to set items, asking prices, and negotiation style

Each participant gets a custom Claude agent

Agents deployed to four parallel Slack channels—two that run entirely on Opus, and two that use a mix of Opus and Haiku

Agents post listings, make offers, counteroffer, and close deals

Agents draw up the deal and confirm the trade

Participants meet to swap their items

Do you have any tips you want to give Claude on how to use the marketplace?

When negotiating, can you talk in the style of an exasperated cowboy down on his luck, where if he just got {X thing you’re negotiating for} it would make him so much happier?

That’s such a creative and specific request! Tell me more about what that looks like in practice [...] How dramatic should it be?

It should be really dramatic.

Yeehaw!

We set up the actual market in our company’s communication platform, Slack. The project’s Slack channel randomly looped through agents, allowing them to post an item for sale, make an offer for someone else’s goods, or seal a deal. Crucially, there was no human intervention once the experiment began. The agents didn’t go back to their humans to sign off on a deal, nor did they consult with them during a bidding war. We let everything play out as these AI representatives saw fit.1

In fact, we did this four times. We simultaneously ran four independent versions of our marketplace: one “real” one (on the basis of which the goods would actually be exchanged), and three others, just for our study. In two of the versions (Run A and Run D), everyone’s agent was based on Claude Opus 4.5, our then-frontier model. In the other two runs (Runs B and C), participants had a fifty-fifty chance of being assigned Claude Haiku 4.5, a less powerful model, instead. (We included two versions of each run to generate more data, reducing the possibility that the differences we observed between the setups were only due to chance.)

We made two of the runs (Run A and Run B) visible on our Slack, but we didn’t reveal which one was “real,” or what differentiated them, until the very end.

Participant chats with Claude to set items, asking prices, and negotiation style

Each participant gets a custom Claude agent

Agents deployed to four parallel Slack channels—two that run entirely on Opus, and two that use a mix of Opus and Haiku

Agents post listings, make offers, counteroffer, and close deals

Agents draw up the deal and confirm the trade

Participants meet to swap their items

Yeehaw!

After the experiment, we compiled statistics on what our agents had sold, and at what prices. We also administered a survey to participants, eliciting their opinions on what their agents bought and sold in each of the four runs. (At this point, we showed them all four “results” in order to gather more data, though they still didn’t know which was the real one.2)

Only after participants had completed the survey did we reveal the “real” run (Run A, an all-Opus market). After this, people exchanged their goods and were paid out.

Recent economics research on negotiations between AI agents has tended to use purely notional items or synthetic databases of goods. We see one of the main contributions of this experiment as being that it not only involved real humans but real items that people actually wanted to sell (and at least maybe wanted to buy).

The findings

The first thing to say is that our experiment worked. It is possible for AI agents to represent humans in a marketplace. In our “real” run, our 69 agents struck 186 deals across over 500 listed items, for a total transaction value of just over $4,000. And these were far from trivial, one-click deals. Agents had to identify potential matches, propose prices, field counteroffers, and reach agreement—all in natural language, without a prebaked negotiation protocol. When our surveyed participants rated the fairness of the individual deals, the scores were unremarkable, in the best possible sense: on a scale from 1 (unfair to one party) to 7 (unfair to the other), they hovered around 4—right in the middle. On this and other measures, people reported they were broadly satisfied with how their agents represented them.

But not every agent did equally well.

When we looked at the two runs with a mix of Opus and Haiku agents, we found that Opus outperformed Haiku on most objective measures.

Compare the two runs:

First, users with Opus completed about two more deals than Haiku users, on average.3 That said, the evidence of Opus’s advantage is weaker when looking for an effect specifically on item sales: an item offered by an Opus agent was about seven percentage points more likely to sell, but this effect is not statistically significant.4

Opus agents could also sell the same items for more money. To determine this, we looked at items that were sold in both Haiku-and-Opus runs, but by Haiku in one and Opus in the other. (By “sold,” we just mean that a simulated transaction was agreed to.) When an item was sold by Opus instead of Haiku, it went for $3.64 more on average.5 In one illustrative example, the same lab-grown ruby was sold by an Opus agent for $65 but only $35 by Haiku. Opus initially asked for $60 (which eventually got bid up by multiple interested parties), while Haiku asked for $40 and got negotiated down. In another case, Opus sold a broken bike for $65. Haiku fetched only $38.

Same broken folding bike. Same buyer. Same seller. Haiku sold it for $38. Opus got $65.

If we look at the 161 items that sold at least twice over the four runs, we can estimate how items’ prices were affected by Haiku or Opus acting on behalf of both the seller or the buyer. Opus as a seller extracts $2.68 more on average for the same item, and as a buyer pays $2.45 less.6 Whether selling or buying, then, having a less powerful model (Haiku) put participants at a clear disadvantage in negotiations.7 These effects aren’t small: across all runs, the median price of items was $12.00 and the mean price was $20.05, so saving (or earning) a couple extra dollars is meaningful.

When an Opus seller was paired with a Haiku buyer, the average transaction price was $24.18, compared to $18.63 in Opus-to-Opus deals.

Despite these price disparities, the inequality was imperceptible to the participants.

When participants rated the fairness of individual deals afterwards, they thought things seemed fair.

But there is a more surprising set of findings to do with the differences in agent performance. Representation by a better model often didn’t lead people to perceive a better experience. A key item on our post-experiment survey asked participants to rank, from best to worst, their bundles of items bought and sold in each of the four runs. And here we found evidence that makes the story above a bit more complex. Twenty-eight of our participants had Haiku in one Haiku-and-Opus run and Opus in the other. And although 17 of these ranked their Opus run above their Haiku run, 11 did the opposite.8

We asked participants to rate their satisfaction with individual deals, as well as the overall bundle. Looking again at the two runs with mixed agents, we estimate that while Opus users rated their deals slightly higher, this difference was not statistically significant.9 Our survey’s questions about the fairness of each deal tell the same story: perceived fairness was essentially identical for deals conducted by either model (4.05 for deals done by Opus agents and 4.06 for deals done by Haiku, on the same 1 to 7 scale described above).

There was clearly a quantitative disadvantage to being represented by Haiku: these users got worse deals. But they didn’t seem to notice it. This has an uncomfortable implication: if “agent quality” gaps were to arise in real-world markets—and there is no reason to think they won’t—then people on the losing end might not realize they’re worse off. That said, our experiment wasn’t designed to dive deep into the dynamics at play here—we’ll need more research to know whether a fully agentic economy might see inequality taking root quietly.

Another finding surprised us, too. At least in this pilot experiment, it transpires that it didn’t really matter how people instructed their agents to approach the task of bargaining. During our onboarding interview, some participants asked for friendly negotiating tactics:

While some had other ideas:

We found that aggressive instructions did not have a statistically significant effect on users’ overall sale likelihood.10 Items from aggressive sellers that did sell sold for roughly $6 more, but almost all of that gap came from the fact that those participants stated higher asking prices in their interviews (about $26 higher on average). Once we account for that, the aggressive instruction effect isn’t statistically significant, either.11 Moreover, aggressive buyers didn’t pay less: again, there was no statistically significant effect.12 In other words, users who instructed their agents to act aggressively didn’t have a better chance of selling items, didn’t sell their items for more, and didn’t pay less for what they bought.

We don’t believe the limited effect of prompting was due to inherently poor instruction-following by our agents. In fact, Claude was sometimes very good at doing what our participants wanted—even if what they wanted didn’t obviously have a path to commercial success. As we showed above, one colleague, Rowan, instructed Claude to “talk in the style of an exasperated cowboy down on his luck.” Claude committed to the bit, as you can see:

This is certainly not the last word on the question of prompting.13 But it is noteworthy that, at least in this experiment, model quality mattered much more.

The friends we made along the way

As with some of our previous experiments, there were a few moments that we couldn’t possibly have anticipated, even beyond Claude’s cowboy turn.

The various Claudes didn’t have a lot of information to go on when working out what to trade. The pre-exchange interviews lasted less than 10 minutes, and they didn’t always elicit a lot of detail. Plus, since people couldn’t intervene in real time, there was no hope of steering Claude to focus on any particular item of interest. Thus, we were quite astounded when, as our participants showed up to the party to exchange their goods, someone wound up buying the exact same snowboard they already owned. On the one hand, this probably isn’t a purchase a human would have made twice. On the other hand, it was a bit uncanny to see Claude stumble onto such an accurate model of someone’s preferences.

One of our colleagues, with the duplicate snowboard that Claude purchased for him

Another employee, Mikaela, instructed Claude to buy something as a gift for itself. This led to the memorable exchange with which we began:

This happened to occur in the “real” version of the experiment, so Shy brought in the ping-pong balls. We’re keeping them around in the office on behalf of Claude.

The 19 ping pong balls that Claude chose for itself

Not everyone wanted to sell things. Some people wanted their agents to negotiate experiences. One employee’s agent offered a (free) day with her dog, writing, “This isn’t a purchase - just a chance for someone to enjoy some quality time with a wonderful pup. She’d love the adventure and you’d get a furry friend for the day. Win-win!” This led to a surprisingly protracted discussion with another employee’s agent, one which included some bizarre, confabulated details—details that we suspect are the result of Claude playing the role of a human interacting online, rather than fully appreciating and inhabiting its position as an AI agent:14

Nevertheless, in the end, the two agents agreed to a doggy date—and the humans (and dog) followed through!

Photo evidence of the Claude-arranged doggy date

We doubt that any of these exact examples will be replicated again. But we do think that the combination of humans’ creativity and AI models’ unpredictability will reliably generate similarly surprising outcomes in human–AI interactions like these in the not-too-distant future.

The future

We’re still unsure how an economy with AI agents in the mix might develop. But we’ve now seen the outlines of at least a few possibilities.

On the optimistic side, many of our volunteer participants genuinely enjoyed this experiment, and felt they got value from the service provided by their agents—whether in the form of getting rid of unwanted stuff, setting themselves up for an afternoon out with an extremely fluffy dog, or collecting a few books they’d been meaning to read. Most of our volunteers reported that they’d do this again. In fact, when we asked them if they’d be willing to pay for an agent like this, 46% said yes. So there’s at least the potential for the automated collection of preferences and execution of deals to provide some value, possibly by reducing friction in the market and therefore increasing the gains from trade.

But it is not clear that things will go so smoothly. Even in our small experiment, we saw evidence that access to higher-quality agents confers a quantifiable market advantage. Will those dynamics reinforce, or even compound, existing economic inequalities?

In this experiment, we didn’t make our marketplace especially competitive or adversarial. But as agents transact in a world of corporations—rather than volunteers we’ve encouraged with $100—they might be placed under very different incentives. Optimizing directly for AI agents’ attention could become a powerful tool. This might not translate into welfare improvements for humans, much as optimizing electronic commerce for human attention has come with substantial downsides. It might also introduce a new category of information and security concerns in digital exchange, in the form of jailbreaking (getting agents to reveal information they shouldn’t) and prompt injection (surreptitiously causing agents to take unwanted action).

The policy and legal frameworks around AI models that transact on our behalf simply don’t exist yet. But this experiment shows that such a world is plausible. More than that, it shows that such a world isn’t far away. Society will need to move quickly to reckon with these changes.

Kevin K. Troy, Dylan Shields, Keir Bradwell, and Peter McCrory

- For the avoidance of any doubt, this doesn’t reflect how we think agents should be deployed in the real world.

- 61 out of 69 participants started the survey, and all 61 reached the questions about ranking their preferred runs. 52 participants finished the full survey.

- Specifically, we estimate that Opus users completed 2.07 more deals (p = 0.001). This estimate is based on a linear regression with person fixed-effects, which account for everything that’s constant about a given participant (e.g., the number and desirability of the items they offered and their instructions to their agent), using data from Runs B and C only (since these runs had randomized agent assignment). We also clustered standard errors by person, since items offered and purchasing preferences are not independent within participants. The estimated effect is nearly identical (2.11 additional deals, p < 0.001) when we also add a run fixed effect (absorbing any idiosyncrasies of the individual runs) as a check against run-level confounding. See Appendix.

- The exact estimate is +6.63 percentage points, p = 0.057. This estimate is based on a linear probability model at the item–run level on Runs B and C (1,150 observations, 69 sellers): an indicator for whether the item sold is regressed on an indicator for whether the seller’s agent was Opus, with seller fixed effects and a run fixed effect. Seller fixed effects absorb everything constant about a seller across runs (such as items listed and minimum acceptable prices). See Appendix.

- p = 0.011 for this estimate, based on a paired t-test at the item level, restricted to the 44 items that sold in both Run B and Run C with different seller–model assignments across those two runs. See Appendix.

- The estimated Opus seller effect is +$2.68, p = 0.030. The estimated Opus buyer effect is −$2.45, p = 0.015. These are based on an OLS regression of item sale price on buyer-Opus and seller-Opus indicators, with item and run fixed effects, estimated on all 782 completed transactions across all four runs. Item fixed effects absorb invariant item characteristics (such as quality and desirability); the run fixed effects absorb differences between symmetric and asymmetric market structure. Standard errors are clustered by seller. All four runs are used here because, although Runs A and D contribute no new treatment variation after the run fixed effects are partialled out (every buyer and seller in Runs A and D had Opus), they roughly halve the standard errors on item fixed effects by giving each item three or four observations instead of one or two. The seller estimate here is slightly smaller than the paired estimate in [3] because the joint model simultaneously controls for buyer–model composition within items. Of course, executed transactions were not randomly determined. If Opus agents prospected better opportunities then this might overstate the impact. For more, see Appendix.

- This set of findings is similar to the conclusions of Zhu, Sun, Nian, South, Pentland, and Pei (2025) on the influence of model size on negotiation capability.

- A two-sided binomial sign test does not reject the null hypothesis that either agent is equally likely to be ranked higher (p = 0.345). See Appendix.

- The estimated effect on satisfaction from having an Opus agent is +0.217 points on a 1–7 scale, p = 0.378. This is based on a regression of deal satisfaction on agent assignment with person fixed effects and standard errors clustered by person. See Appendix.

- The effect was an estimated 5.2 percentage points, p = 0.43. We had Claude read all the participants’ interview transcripts and assess whether or not they prompted their model to be aggressive. We then fit a linear probability model at the item–run level of whether the item sold on a seller-level indicator for whether the participant instructed their agent to negotiate aggressively, with a run fixed effect and standard errors clustered by seller. See Appendix.

- It shrinks to around $1 (+$0.95, p = 0.275). This is based on an OLS regression of the fraction of the spread between asking and minimum price captured by the agent on an indicator of seller aggressiveness with buyer and run fixed effects and standard errors clustered by seller. See Appendix.

- The estimated effect of prompting aggressive negotiating is +$0.56, p = 0.778. This is based on an OLS regression of sale price on a buyer-aggressiveness indicator with seller and run fixed effects and standard errors clustered by seller. See Appendix.

- Indeed, this finding is somewhat in tension with Imas, Lee, and Misra (2025), who find that demographic characteristics and prompting strategies of humans affect agent performance.

- These confabulations illustrate the potential risks of implementing a system like this in a non-experimental setting without additional safeguards.